NVIDIA Opens MRC Networking Standard to All, Boosting AI Factory Performance

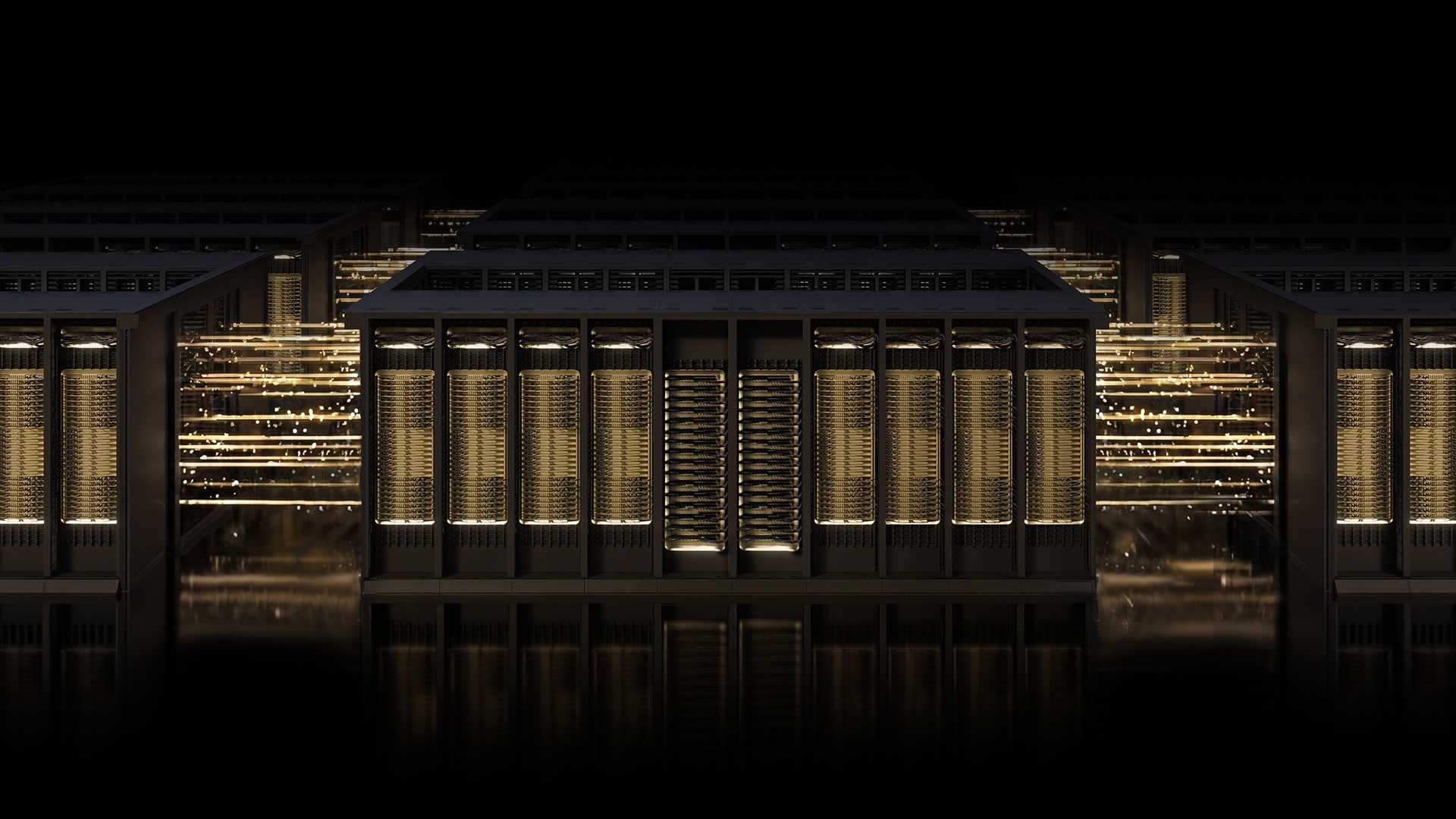

NVIDIA today announced that its Multipath Reliable Connection (MRC) protocol, a key component of the Spectrum-X Ethernet platform, is now available as an open specification through the Open Compute Project (OCP). This move aims to accelerate the development of gigascale AI factories by enabling any organization to adopt advanced networking techniques previously limited to NVIDIA's hardware ecosystem.

MRC lets a single RDMA (remote direct memory access) connection spread its traffic across multiple network paths at the same time, improving throughput, load balancing, and availability in large-scale AI training clusters. The protocol is already running in production environments, including OpenAI's frontier training runs and Microsoft's Fairwater data center.

“Deploying MRC in the Blackwell generation was very successful and was made possible by a strong collaboration with NVIDIA,” said Sachin Katti, head of industrial compute at OpenAI. “MRC’s end-to-end approach enabled us to avoid much of the typical network-related slowdowns and interruptions and maintain the efficiency of frontier training runs at scale.”

NVIDIA Spectrum-X Ethernet infrastructure, which powers MRC, is used by industry leaders including OpenAI, Microsoft, and Oracle. The technology is specifically designed for the demands of AI factories—massive data centers purpose-built for training and deploying leading-edge large language models.

Background

Modern AI training requires networking that can move enormous data volumes with minimal latency and almost no interruptions. Traditional Ethernet fabrics often suffer from congestion, packet loss, and uneven load balancing, which leave GPUs idle and lengthen training runs.

NVIDIA developed MRC to address these challenges. Think of it as replacing a single-lane road with a street grid and a real-time traffic app: when one path becomes congested, traffic is dynamically rerouted to avoid slowdowns. The protocol works in concert with Spectrum-X's purpose-built hardware, deep telemetry, and intelligent fabric control.
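The street-grid analogy can be made concrete with a toy model. The Python sketch below is only an illustration of congestion-aware multipath forwarding in general, not MRC's actual algorithm or API: the `Path` class, the `congested` flag, and the `pick_path` and `spray` helpers are all hypothetical names invented for this example. It sends the packets of one logical connection over whichever uncongested path currently has the shortest queue.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One network path, with a toy congestion model (hypothetical)."""
    name: str
    queued: int = 0          # packets currently queued on this path
    congested: bool = False  # assumed to come from some telemetry signal


def pick_path(paths):
    """Return the least-loaded path that is not marked congested.

    Falls back to the least-loaded path overall if every path is congested.
    """
    healthy = [p for p in paths if not p.congested]
    candidates = healthy or paths
    return min(candidates, key=lambda p: p.queued)


def spray(num_packets, paths):
    """Spread the packets of one logical connection across the paths."""
    assignment = []
    for seq in range(num_packets):
        path = pick_path(paths)
        path.queued += 1
        assignment.append((seq, path.name))
    return assignment


# Four paths, one of which is reported as congested.
paths = [Path("A"), Path("B", congested=True), Path("C"), Path("D")]
assignment = spray(9, paths)
counts = {p.name: p.queued for p in paths}
print(counts)  # {'A': 3, 'B': 0, 'C': 3, 'D': 3}
```

In this toy run the congested path B receives no traffic while the other three share the load evenly. A real fabric would also have to handle out-of-order arrival and changing congestion signals, which this sketch leaves out.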

“Collaboration across the ecosystem is essential for advancing AI infrastructure,” said an NVIDIA spokesperson. “By open-sourcing MRC, we enable broader innovation and accelerate the path to gigascale AI.”

Microsoft and NVIDIA have a long-standing partnership on next-generation AI infrastructure. Microsoft’s Fairwater facility and Oracle Cloud Infrastructure’s Abilene data center—two of the largest AI factories ever built—rely on MRC to meet stringent performance, scale, and efficiency targets.

What This Means

The open release of MRC means that any cloud provider, enterprise, or research institution can now implement the same advanced networking protocol used by the world's most demanding AI workloads. This could lower barriers to entry for building large-scale AI training environments and foster a more competitive ecosystem.

Key benefits of MRC include:

- Higher GPU utilization – by load-balancing traffic across all available paths, every GPU gets the bandwidth it needs throughout a training run.

- Sustained high bandwidth – even under congestion, the protocol dynamically avoids overloaded paths in real time.

- Intelligent retransmission – when data loss occurs, rapid, precise recovery minimizes the impact of short-lived interruptions on long-running jobs, helping avoid GPU idle time.

- Operational visibility – administrators gain fine-grained control over traffic paths, simplifying troubleshooting and operations.
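To see why precise recovery matters, compare two generic retransmission strategies. The sketch below assumes that "precise" means resending only the packets that were actually lost (selective retransmission). The article does not describe MRC's recovery mechanism, so this is an assumption, and the function names are hypothetical. The contrast case is a go-back-N scheme, which resends everything from the first gap onward.

```python
def missing_sequences(received, total):
    """Sequence numbers in [0, total) that the receiver has not seen."""
    return sorted(set(range(total)) - set(received))


def go_back_n_resend(received, total):
    """Contrast case: resend everything from the first gap onward."""
    gaps = missing_sequences(received, total)
    return list(range(gaps[0], total)) if gaps else []


# Eight packets were sent; packets 3 and 6 were lost in transit.
received = [0, 1, 2, 4, 5, 7]
total = 8

selective = missing_sequences(received, total)
go_back_n = go_back_n_resend(received, total)

print(selective)  # [3, 6]
print(go_back_n)  # [3, 4, 5, 6, 7]
```

Here the selective approach resends two packets where go-back-N resends five. On a long-running training job, that difference is one way a short-lived loss could be kept from turning into GPU idle time.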

With MRC now an open specification, networking vendors can build compatible solutions, which could speed up AI infrastructure development across the industry. The protocol is expected to become a cornerstone of future Ethernet-based AI fabrics, allowing training clusters to scale more efficiently.

NVIDIA will continue to optimize MRC within its Spectrum-X platform while engaging with the OCP community to drive further enhancements. The company emphasizes that the protocol's success in production—particularly at OpenAI and Microsoft—demonstrates its readiness for widespread adoption.

As AI models grow larger and training runs become more complex, the need for resilient, high-performance networking becomes critical. MRC's open specification marks a significant step toward meeting that need, setting a new standard for how AI factories communicate internally.
